Chapter 17 — The Raspberry Pi as AI Node: MQTT Meets the LLM

This chapter consolidates two years of IoT work: a Raspberry Pi 4 running a local AI model, connected to the MQTT broker, that processes sensor data and responds in natural language.

Local LLMs on a Pi

A Raspberry Pi 4 with 8GB RAM can run quantized language models of up to about 7B parameters at roughly 1–3 tokens/second, with smaller models toward the upper end of that range. That’s not fast: a response to a sensor query takes 10–30 seconds. For interactive chat, it’s too slow. For autonomous monitoring tasks that run once per hour, it’s fine.
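As a rough check on those numbers, generation time is output length divided by generation rate. The sketch below assumes a reply of about 30 tokens (an illustrative figure, not a measurement) and ignores prompt processing and model loading, both of which add further delay on a Pi.

```python
def response_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Estimate generation time, ignoring prompt processing and model load."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Assumed reply length of 30 tokens (illustrative only)
for rate in (1.0, 3.0):
    print(f"{rate} tok/s -> {response_seconds(30, rate):.0f} s")
# prints:
# 1.0 tok/s -> 30 s
# 3.0 tok/s -> 10 s
```

Longer answers scale linearly, so keeping the requested output short matters more on a Pi than on a desktop GPU.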

The practical stack:

- Ollama for model serving — one command to download and run a model

- Llama 3.2 3B or Phi-3.5 mini for Pi-compatible size

- Python + requests to interact with the Ollama API
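The last item in the stack can be sketched as a small helper around Ollama's `/api/generate` endpoint. This version uses only the standard library's `urllib` so it runs without extra packages; with `requests` the call would be `requests.post(url, json=payload)`. The helper names `build_request` and `ask_ollama` and the 120-second timeout are assumptions made for illustration.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default port


def build_request(prompt: str, model: str = "phi3.5") -> urllib.request.Request:
    """Build a POST request; "stream": False asks for a single JSON reply."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        OLLAMA_URL,
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_ollama(prompt: str, model: str = "phi3.5", timeout: float = 120.0) -> str:
    """Send one prompt to a running Ollama server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With the server running and the model pulled, `ask_ollama("Is 22°C a good temperature for a bedroom?")` should return the model's answer as a plain string. The generous timeout reflects the slow generation speed on a Pi.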

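Setup and a quick test from the shell:

    # On the Pi (skip "ollama serve" if Ollama already runs as a service;
    # otherwise it stays in the foreground, so use a second terminal below)
    ollama serve

    ollama pull phi3.5

    # Test ("stream": false returns one JSON object instead of a stream)
    curl http://localhost:11434/api/generate \
      -d '{"model": "phi3.5", "prompt": "Is 22°C a good temperature for a bedroom?", "stream": false}'

The MQTT + AI Pipeline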

The architecture: an MQTT subscriber that accumulates sensor readings, packages them as context, and periodically queries the local LLM for anomalies or recommendations.

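    import json

    import paho.mqtt.client as mqtt
    import requests

    OLLAMA_URL = "http://localhost:11434/api/generate"

    readings = {}      # latest value per topic
    message_count = 0  # numeric readings received so far


    def on_message(client, userdata, msg):
        global message_count
        try:
            value = float(msg.payload.decode())
        except ValueError:
            return  # ignore payloads that are not plain numbers

        readings[msg.topic] = value
        message_count += 1

        # Every 20 new readings, ask the LLM for analysis.
        # (Counting messages, not dict keys: len(readings) only counts topics.)
        if message_count % 20 == 0:
            context = json.dumps(readings, indent=2)
            prompt = (
                "Here are the current sensor readings from my home:\n"
                f"{context}\n"
                "Are there any values outside normal ranges?\n"
                "Should I take any action?"
            )
            try:
                # Blocks this callback while the model generates
                resp = requests.post(
                    OLLAMA_URL,
                    json={"model": "phi3.5", "prompt": prompt, "stream": False},
                    timeout=120,
                )
                resp.raise_for_status()
            except requests.RequestException as exc:
                print(f"LLM request failed: {exc}")
                return
            analysis = resp.json()["response"]

            # Publish the analysis back to MQTT for Home Assistant
            client.publish("home/ai/analysis", analysis)


    # paho-mqtt 2.x requires the callback API version; on 1.x use mqtt.Client()
    client = mqtt.Client(mqtt.CallbackAPIVersion.VERSION2)
    client.on_message = on_message
    client.connect("localhost", 1883)
    client.subscribe("home/sensors/#")
    client.loop_forever()

The analysis is published back to MQTT. Home Assistant picks it up as a text sensor entity, displays it on a dashboard, and can trigger a notification when the LLM flags something unusual.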
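One side effect of calling the model inside `on_message` is that the MQTT network loop stalls for the 10–30 seconds a reply takes. A possible workaround, sketched here under assumptions, is to hand a snapshot of the readings to a background thread through a queue. `analyze` and `publish` are hypothetical callables standing in for the Ollama request and `client.publish`; the function names and the queue size of one are illustrative choices, not part of the original setup.

```python
import queue
import threading


def start_worker(analyze, publish):
    """Run analyze(snapshot) off the MQTT thread and publish each result.

    analyze and publish are placeholders (hypothetical) for the Ollama
    request and for publishing to home/ai/analysis.
    """
    jobs = queue.Queue(maxsize=1)  # at most one snapshot waiting

    def run():
        while True:
            snapshot = jobs.get()
            if snapshot is None:  # sentinel: stop the worker
                break
            try:
                publish(analyze(snapshot))
            except Exception as exc:  # keep the worker alive on failures
                print(f"analysis failed: {exc}")

    thread = threading.Thread(target=run, daemon=True)
    thread.start()
    return jobs, thread


def submit(jobs, snapshot):
    """Queue a copy of the readings; drop it if one is already waiting."""
    try:
        jobs.put_nowait(dict(snapshot))
        return True
    except queue.Full:
        return False
```

In the subscriber, the request inside the callback would then shrink to a single `submit(jobs, readings)` call. Dropping a snapshot when one is already queued is a deliberate trade-off: on hardware this slow, a fresh analysis later is worth more than a backlog of stale ones.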

sequenceDiagram

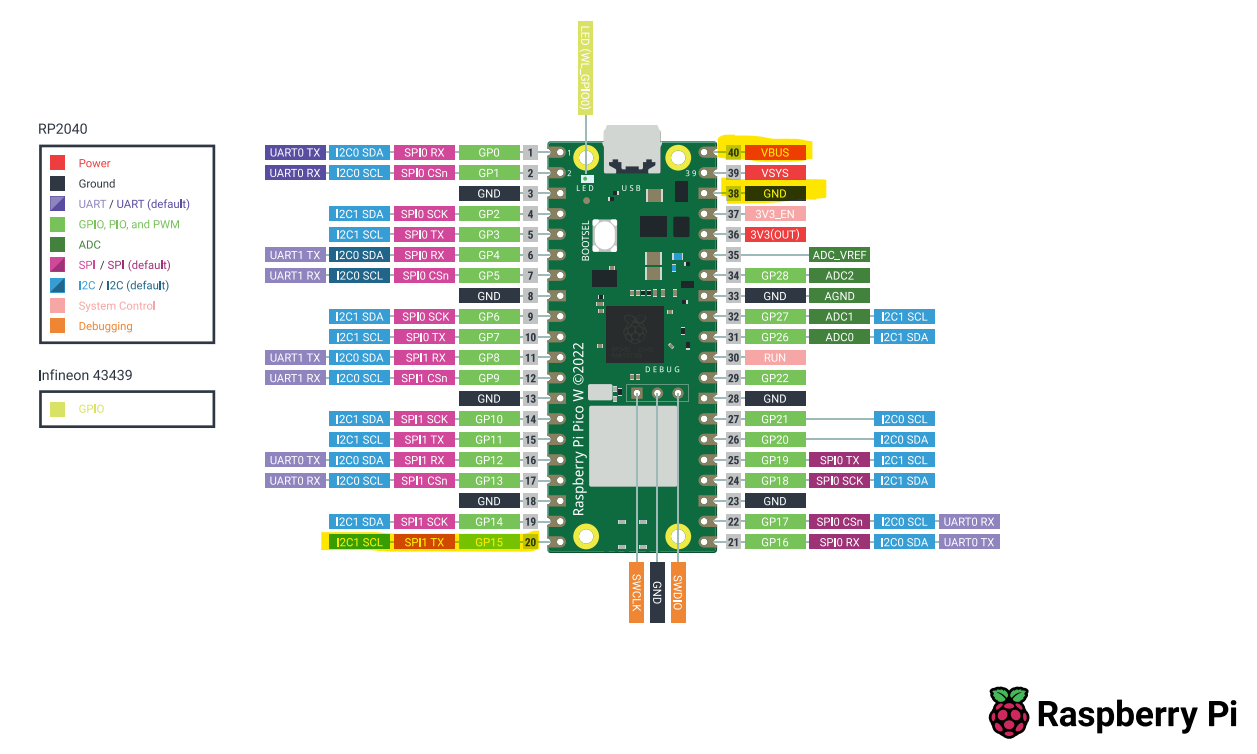

participant Sensor as DHT22 + Pico W

participant Broker as MQTT Broker

participant Py as Python Subscriber

participant Ollama as Ollama (Pi 4)

participant HA as Home Assistant

Sensor->>Broker: publish home/sensors/# (every 30s)

Broker->>Py: deliver readings

Py->>Py: accumulate 20 readings

Py->>Ollama: POST /api/generate\n{context + prompt}

Ollama->>Py: analysis text (10–30s)

Py->>Broker: publish home/ai/analysis

Broker->>HA: deliver analysis text

HA->>HA: display on dashboard

HA-->>HA: trigger notification\nif anomaly detected

The Realistic Expectation

A 3B-parameter model running at a few tokens per second on a Pi will not replace a data analyst. It will catch obvious patterns (“temperature has been rising steadily for 3 hours”), flag values outside normal ranges (“CO2 is at 1800ppm, which is significantly above typical indoor levels”), and suggest simple actions (“consider opening a window or turning on ventilation”).
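Spotting a trend such as a three-hour rise requires the prompt to contain more than the latest value per topic. A minimal sketch, assuming a fixed-length history per topic; the `History` class, the `maxlen` default, and the topic name are illustrative and not part of the original subscriber.

```python
import json
from collections import defaultdict, deque


class History:
    """Keep the most recent `maxlen` values for each topic."""

    def __init__(self, maxlen: int = 12):
        self._data = defaultdict(lambda: deque(maxlen=maxlen))

    def add(self, topic: str, value: float) -> None:
        self._data[topic].append(value)

    def as_context(self) -> str:
        """Serialize the histories, oldest value first, for use in a prompt."""
        return json.dumps({t: list(v) for t, v in self._data.items()}, indent=2)


history = History(maxlen=3)
for temp in (21.0, 21.5, 22.0, 22.5):
    # Hypothetical topic name, used only for this example
    history.add("home/sensors/bedroom/temperature", temp)
print(history.as_context())  # the oldest value, 21.0, has been dropped
```

Passing `history.as_context()` into the prompt instead of a single value per topic gives the model something to compare against. The cost is a longer prompt, which a Pi processes slowly, so a short window is probably the sensible starting point.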

That’s useful. Not magic. The value is in turning passive sensor data into a proactive notification system without building complex rule engines manually.

Takeaway: Ollama on a Pi 4 runs quantized 3B models at usable speed for batch analysis. Use MQTT as the bus between sensors, AI analysis, and Home Assistant. Set realistic expectations — local small models are useful, not omniscient.